Project Goals

The main goal of the project was to write an app that automatically handles updates on Gentoo Linux systems and sends notifications with update summaries. More specifically, I wanted to:

- Simplify the update process for beginners by offering a one-click method.

- Minimize the time experienced users spend on routine update tasks.

- Ensure systems remain secure and regularly updated with minimal manual intervention.

- Keep users informed of the updates and changes.

- Improve the overall Gentoo Linux user experience.

Progress

Here is a summary of what was done every week with links to my blog posts.

Week 1

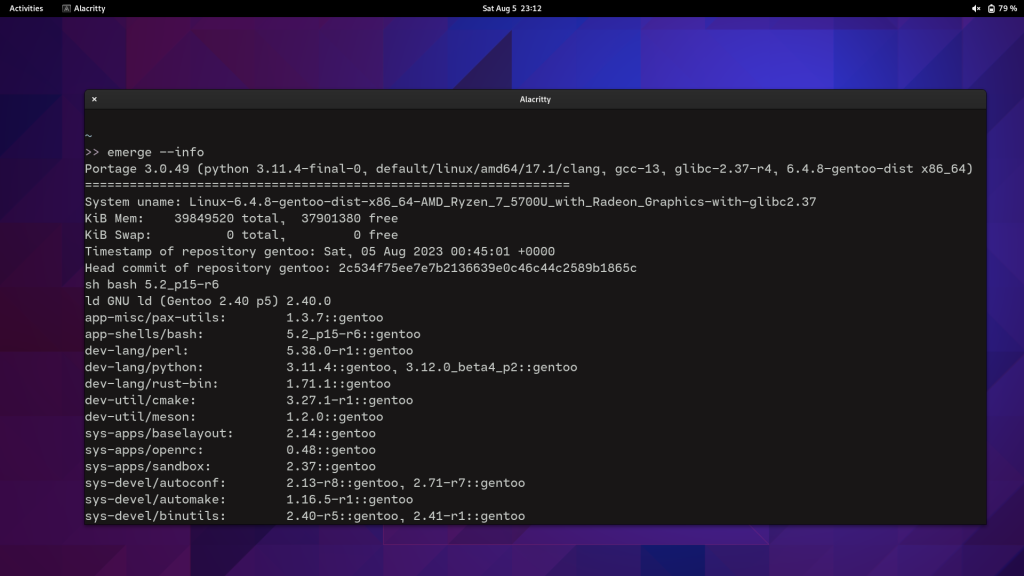

The basic system updater is ready. I also prepared a Docker Compose file to run tests in containers. Available functionality:

- update security patches

- update @world

- merge changed configuration files

- restart updated services

- do a post-update cleanup

- read elogs

- read news

Links:

Week 2

Packaged the Python code, created an ebuild, and added a GitHub Actions workflow that publishes the package to PyPI when a commit is tagged.

Links:

Week 3

Fixed issue #7, responded to issue #8, and fixed bug 908308. Added USE flags to manage dependencies. Improved Bash code stability.

Links:

Week 4

Fixed errors in the ebuild and replaced USE flags with optfeature for dependency management. Wrote a blog post introducing the app and posted it on the forums. Fixed a bug in the --args flag.

Links:

Week 5

Received some feedback from the forums. Coded much of the parser (--report). Improved the container testing environment.

Links:

- Improved dockerfiles

Weeks 6 and 7

Completed the parser (--report). Also added disk usage calculation before and after the update. Available functionality:

- If the update was successful, the report will show:

  - updated package names

  - package versions in the format “old -> new”

  - USE flags of those packages

  - disk usage before and after the update

- If emerge pretend has failed, the report will show:

  - error type (for now, only the ‘blocked packages’ error is supported)

  - error details (for blocked packages, it will show the problematic packages)
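To give a rough idea of what the “old -> new” part of such a report involves, here is a minimal sketch in Python. It is not the actual gentoo_update parser: the regular expression, the `PackageUpdate` class, and the `parse_pretend` and `used_bytes` helpers are hypothetical names made up for this illustration, and the line format is an assumption about what verbose emerge pretend output typically looks like. Blocked-package errors are not handled here.

```python
from __future__ import annotations

import re
import shutil
from dataclasses import dataclass, field

# Assumed shape of one package line in verbose emerge pretend output, e.g.
# [ebuild     U  ] app-misc/foo-1.2.3::gentoo [1.2.2::gentoo] USE="bar -baz" 120 KiB
# Real output has more variations than this simplified pattern covers.
LINE_RE = re.compile(
    r'^\[ebuild[^\]]*\]\s+'
    r'(?P<atom>\S+?)-(?P<new>\d[^\s:]*)(?:::\S+)?'
    r'(?:\s+\[(?P<old>[^\]:\s]+)(?:::[^\]]*)?\])?'
    r'(?:.*?\bUSE="(?P<use>[^"]*)")?'
)


@dataclass
class PackageUpdate:
    """One updated (or newly installed) package. Hypothetical helper type."""
    name: str
    new_version: str
    old_version: str | None = None
    use_flags: list[str] = field(default_factory=list)

    def version_change(self) -> str:
        # Render the version the way the report describes it: "old -> new".
        if self.old_version is None:
            return f"new: {self.new_version}"
        return f"{self.old_version} -> {self.new_version}"


def parse_pretend(output: str) -> list[PackageUpdate]:
    """Collect package updates from text that follows the assumed format."""
    updates = []
    for line in output.splitlines():
        match = LINE_RE.match(line.strip())
        if match is None:
            continue  # headers, blank lines, totals, and so on
        use = match.group("use")
        updates.append(
            PackageUpdate(
                name=match.group("atom"),
                new_version=match.group("new"),
                old_version=match.group("old"),
                use_flags=use.split() if use else [],
            )
        )
    return updates


def used_bytes(path: str = "/") -> int:
    """Bytes currently used on the filesystem that contains `path`."""
    return shutil.disk_usage(path).used


if __name__ == "__main__":
    sample = (
        '[ebuild     U  ] app-misc/foo-1.2.3::gentoo [1.2.2::gentoo] USE="bar -baz"\n'
        '[ebuild  N     ] dev-libs/qux-0.4 USE="doc"\n'
    )
    before = used_bytes("/")
    for pkg in parse_pretend(sample):
        print(pkg.name, pkg.version_change(), pkg.use_flags)
    # In a real run the update would happen between the two measurements.
    print("disk usage delta (bytes):", used_bytes("/") - before)
```

Calling `used_bytes` once before and once after the update and subtracting the two values is one simple way to get the disk usage difference mentioned above.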

Links:

Week 8

Added two notification methods (--send-reports): an IRC bot and email via SendGrid.

Links:

Weeks 9 and 10

Improved CLI argument handling. Experimented with different mobile app UI layouts and backend options. Fixed issue #17. Started working on the mobile app UI and decided to use Firebase for the backend.

Links:

Weeks 11 and 12

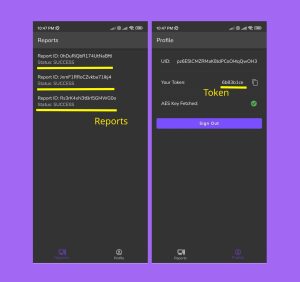

Completed the mobile app (UI + backend). Created a plan to migrate to a custom self-hosted backend based on Django+MongoDB+Nginx in the future. Added the --send-reports mobile option to the CLI. Available functionality:

- UI

  - Login screen: Anonymous login

  - Reports screen: Receive and view reports sent from the CLI app.

  - Profile screen: View token, user ID and Sign Out button.

- Backend

  - Create anonymous users (Cloud Functions)

  - Create user tokens (Cloud Functions)

  - Receive tokens in HTTPS requests, verify them, and route to users (Cloud Functions)

  - Send push notifications (FCM)

  - Secure database access with Firestore security rules

Links:

- Pull requests: #18

- Mobile app repository

Final week

Added token encryption with Cloud Functions. Packaged the mobile app with GitHub Actions and published it to the Google Play Store. Recorded a demo video and wrote the gentoo_update User Guide, which covers both the CLI and the mobile app.

Links:

- Demo video

- gentoo_update User Guide

- Packaging GitHub Actions workflow

- Google Play link

- Release page

Project Status

I am very satisfied with the current state of the project. Almost all tasks from the proposal were completed, and there is a product that can already be used. To summarize, here is a list of deliverables:

- Source code for gentoo_update CLI app

- gentoo_update CLI app ebuild in GURU repository

- gentoo_update CLI app package on PyPI

- Source code for mobile app

- Mobile app for Android as an APK

- Mobile app for Android in Google Play

Future Improvements

I plan to add many more features to both the CLI and mobile apps. Full feature lists can be found in the READMEs of both repositories.

Final Thoughts

These 12 weeks felt like a hackathon, where I had to learn new technologies quickly and build something that works in a short time. I faced many challenges and acquired a range of new skills.

Over the course of this project, I built both a Linux CLI application using Python and Bash and a mobile app with Flutter and Firebase. To maintain the quality of my work, I tested the code in Docker containers, virtual machines and on physical hardware. Additionally, I built and deployed CI/CD pipelines with GitHub Actions to automate packaging. Beyond the technical side, I engaged actively with the Gentoo community through IRC and the forums, and I addressed and resolved issues on both GitHub and Gentoo Bugs, which deepened my understanding and refined my skills.

I would also like to thank my mentor, Andrey Falko, for all his help and support. I wouldn’t have been able to finish this project without his guidance.

In addition, I want to thank Google for providing such a generous opportunity for open source developers to work on bringing forth innovation.

Lastly, I am grateful to the Gentoo community for the feedback that helped me improve the project immensely.